I still remember the first time I explored the procedurally generated world of Minecraft back in 2011. Walking over a hill and discovering a massive cave system, knowing that no developer had placed it by hand and that no other player might ever see it in quite the same way, felt genuinely magical. That experience sparked my fascination with how games create endless, believable landscapes without armies of artists sculpting every rock and tree.

Today, AI terrain generation has evolved far beyond simple randomization. It’s become a sophisticated blend of mathematics, creativity, and machine learning that’s fundamentally changing how we build and experience game worlds.

What Actually Is AI Terrain Generation?

At its core, terrain generation uses algorithms, sets of mathematical rules, to create game environments automatically rather than by hand. While “AI” gets thrown around loosely here, we’re really talking about several approaches: classic procedural generation using noise functions, rule-based systems, and, increasingly, machine learning models trained on real-world geographical data or artist-created content.

The practical difference is enormous. A talented environment artist might spend weeks creating a few square kilometers of detailed landscape. A well-designed generation system can produce thousands of square kilometers in minutes, each area unique yet coherent.

The Building Blocks: How It Actually Works

Most terrain generation starts with noise functions, particularly Perlin noise, created by Ken Perlin in the 1980s. Think of it as controlled randomness. Pure random numbers create television static; Perlin noise creates flowing, natural-looking patterns that mimic the organic irregularity we see in nature.

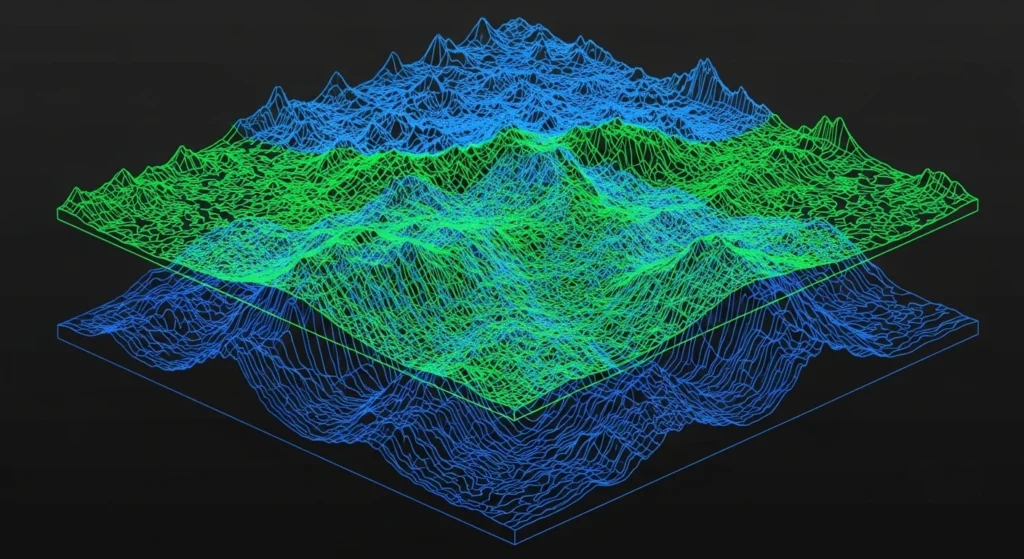
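To make “controlled randomness” concrete, here is a minimal 2D gradient-noise sketch that loosely follows the structure of Perlin’s improved noise: a seeded permutation table, a quintic fade curve, and four diagonal gradients. The function names and the seeded shuffle are my own illustrative choices, not a reference implementation.

```python
import math
import random


def make_permutation(seed: int) -> list[int]:
    """Shuffle 0..255 with a seeded RNG and double the table so lookups never wrap."""
    rng = random.Random(seed)
    p = list(range(256))
    rng.shuffle(p)
    return p + p


def fade(t: float) -> float:
    """Perlin's quintic ease curve 6t^5 - 15t^4 + 10t^3, smooth at cell edges."""
    return t * t * t * (t * (t * 6 - 15) + 10)


def lerp(a: float, b: float, t: float) -> float:
    return a + t * (b - a)


def grad(h: int, x: float, y: float) -> float:
    """Dot product of (x, y) with one of four diagonal gradients picked by the hash."""
    u = x if (h & 1) == 0 else -x
    v = y if (h & 2) == 0 else -y
    return u + v


def perlin2d(x: float, y: float, perm: list[int]) -> float:
    """Gradient noise at (x, y); values stay within [-1, 1] with these gradients."""
    xi, yi = math.floor(x) & 255, math.floor(y) & 255
    xf, yf = x - math.floor(x), y - math.floor(y)
    u, v = fade(xf), fade(yf)
    # Hash the four surrounding lattice corners.
    aa = perm[perm[xi] + yi]
    ab = perm[perm[xi] + yi + 1]
    ba = perm[perm[xi + 1] + yi]
    bb = perm[perm[xi + 1] + yi + 1]
    # Blend the corner contributions, first along x, then along y.
    x1 = lerp(grad(aa, xf, yf), grad(ba, xf - 1, yf), u)
    x2 = lerp(grad(ab, xf, yf - 1), grad(bb, xf - 1, yf - 1), u)
    return lerp(x1, x2, v)


perm = make_permutation(seed=2011)
# Dividing by 8 means roughly one lattice cell every 8 samples.
heightmap = [[perlin2d(x / 8, y / 8, perm) for x in range(32)] for y in range(32)]
```

Gradient noise is exactly zero at every integer lattice point, which is why the sketch samples at fractional coordinates such as `x / 8` rather than whole numbers.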

I’ve worked with these systems enough to know the devil’s in the layering. You don’t just run one noise function and call it done. You stack multiple layers, called octaves, at different scales: one layer creates mountain ranges, another adds rolling hills, and a third introduces small rocky outcrops. Each layer adds detail at a different scale, much like how real geology works through tectonic forces, erosion, and weathering over different timescales.
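Stacking octaves is often called fractal Brownian motion (fBm). The sketch below is a minimal version under a few assumptions: to keep the block self-contained it uses simple hashed value noise as the base layer instead of Perlin noise, and the hash constants, `lacunarity` (frequency multiplier) and `gain` (amplitude multiplier) defaults are illustrative, not canonical.

```python
import math


def lattice_value(ix: int, iy: int, seed: int) -> float:
    """Hash an integer lattice point to a pseudo-random value in [-1, 1]."""
    h = (ix * 374761393 + iy * 668265263 + seed * 982451653) & 0xFFFFFFFF
    h = ((h ^ (h >> 13)) * 1274126177) & 0xFFFFFFFF
    h ^= h >> 16
    return h / 0xFFFFFFFF * 2.0 - 1.0


def value_noise(x: float, y: float, seed: int) -> float:
    """Smoothly interpolate the four surrounding lattice values (value noise)."""
    ix, iy = math.floor(x), math.floor(y)
    fx, fy = x - ix, y - iy
    sx, sy = fx * fx * (3 - 2 * fx), fy * fy * (3 - 2 * fy)  # smoothstep weights
    v00 = lattice_value(ix, iy, seed)
    v10 = lattice_value(ix + 1, iy, seed)
    v01 = lattice_value(ix, iy + 1, seed)
    v11 = lattice_value(ix + 1, iy + 1, seed)
    top = v00 + sx * (v10 - v00)
    bottom = v01 + sx * (v11 - v01)
    return top + sy * (bottom - top)


def fbm(x: float, y: float, seed: int, octaves: int = 5,
        lacunarity: float = 2.0, gain: float = 0.5) -> float:
    """Sum octaves of noise; with the defaults each octave doubles frequency
    (finer features) and halves amplitude (smaller bumps)."""
    total, amplitude, frequency, norm = 0.0, 1.0, 1.0, 0.0
    for octave in range(octaves):
        # A different seed per octave keeps the layers from lining up.
        total += amplitude * value_noise(x * frequency, y * frequency, seed + octave)
        norm += amplitude
        amplitude *= gain
        frequency *= lacunarity
    return total / norm  # normalise back into [-1, 1]
```

The first octave plays the role of the mountain ranges, and each later octave adds progressively smaller hills and outcrops on top.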

More recent approaches incorporate machine learning. Developers train neural networks on real topographical data from places like the Himalayas or the Grand Canyon. The network learns what makes terrain look “real,” how rivers carve valleys, how erosion patterns work, and how plateaus form. When generating new terrain, it applies these learned patterns to create landscapes that feel geologically plausible even though they never existed.

Real-World Applications: Where We See This Technology

No Man’s Sky is perhaps the most ambitious implementation: 18 quintillion planets, each with unique terrain, generated from a single seed number using deterministic algorithms. Every player who visits the same coordinates sees identical terrain because the algorithm produces the same output from the same input. It’s mathematically impressive, though early versions struggled with repetitiveness, a common pitfall when your generation rules aren’t complex enough.
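No Man’s Sky’s actual generator isn’t documented in detail, so this is only a sketch of the general principle: derive a stable per-location seed from the world seed plus coordinates, then drive every random choice for that location from it. The `region_seed` and `generate_planet` names, the SHA-256 mixing, and the planet fields are illustrative assumptions. For scale, a 64-bit seed has 2**64 ≈ 1.8 × 10^19 possible values, which matches the “18 quintillion” figure.

```python
import hashlib
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class PlanetInfo:
    # Hypothetical fields, just to show that everything derives from one seed.
    radius_km: int
    water_coverage: float
    mountain_scale: float


def region_seed(world_seed: int, x: int, y: int, z: int) -> int:
    """Derive a stable 64-bit seed for one location from the world seed and coordinates."""
    key = f"{world_seed}:{x}:{y}:{z}".encode()
    return int.from_bytes(hashlib.sha256(key).digest()[:8], "little")


def generate_planet(world_seed: int, x: int, y: int, z: int) -> PlanetInfo:
    """Same inputs always give the same planet; nothing needs to be stored."""
    rng = random.Random(region_seed(world_seed, x, y, z))
    return PlanetInfo(
        radius_km=rng.randint(1_000, 8_000),
        water_coverage=round(rng.uniform(0.0, 0.9), 3),
        mountain_scale=round(rng.uniform(0.1, 2.0), 3),
    )


planet = generate_planet(world_seed=42, x=1, y=2, z=3)
```

The sketch uses `hashlib` rather than Python’s built-in `hash()` because string hashing is randomized per process, which would break the “same coordinates, same terrain” guarantee across runs.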

Red Dead Redemption 2 took a hybrid approach that I find more interesting from a design perspective. Rockstar’s artists crafted the overall landscape by hand, ensuring specific vistas and gameplay opportunities existed exactly where they wanted them. But they used procedural scattering algorithms for vegetation placement, rock distribution, and ground detail. This gives them artistic control where it matters while letting algorithms handle the tedious placement of thousands of individual plants and stones.
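Procedural scattering usually needs points that look random but never clump or overlap. A common technique for this is Poisson disk sampling; the sketch below is the naive “dart-throwing” variant (Bridson’s algorithm is the faster standard choice). It is not Rockstar’s tooling, and the `scatter_points` name and its optional `allow` mask, which stands in for artist-painted exclusion zones, are hypothetical.

```python
import math
import random


def scatter_points(width: float, height: float, min_dist: float,
                   attempts: int = 2000, seed: int = 0, allow=None):
    """Naive Poisson disk sampling: keep a random candidate only if it is at
    least min_dist from every accepted point and passes the optional mask."""
    rng = random.Random(seed)
    points: list[tuple[float, float]] = []
    min_d2 = min_dist * min_dist  # compare squared distances, no sqrt needed
    for _ in range(attempts):
        x, y = rng.uniform(0, width), rng.uniform(0, height)
        if allow is not None and not allow(x, y):
            continue  # e.g. a road, a river, or a hand-placed clearing
        if all((x - px) ** 2 + (y - py) ** 2 >= min_d2 for px, py in points):
            points.append((x, y))
    return points


trees = scatter_points(100, 100, min_dist=5.0, seed=1)
# Keep the southern strip clear, as a designer might for a campsite.
forest_edge = scatter_points(100, 100, 5.0, seed=2, allow=lambda x, y: y > 20)
```

This mirrors the hybrid split described above: designers control where things may go through the mask, and the algorithm handles the tedious placement inside it.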

Battle royale games like Fortnite and PUBG use procedural techniques differently: not to create entirely new maps, but to add variation to existing ones. The terrain might be handcrafted, but loot placement, storm patterns, and environmental details often use randomization to keep matches fresh.

The Challenges Nobody Talks About Enough

Generating terrain is one thing. Generating playable, fun terrain is entirely different.

I’ve seen countless student projects where the algorithm creates stunning mountain vistas that are completely unclimbable, or gorgeous forests so dense you can’t walk through them. The terrain might be mathematically interesting, but practically useless for gameplay.

The solution usually involves constraint systems: rules that override pure generation when needed. Rivers need to flow downhill; you’d think this is obvious, but early algorithms frequently created rivers that flowed uphill. Paths between objectives must exist. Slopes can’t exceed certain angles in areas where players need to walk.
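Two of those constraints can be checked with very little code. In this sketch (function names, the 35° default, and the 4-connected grid are all illustrative assumptions), `path_exists` runs a breadth-first search that only steps between cells whose slope is walkable, and `trace_river` follows steepest descent so every step is strictly downhill by construction.

```python
import math
from collections import deque

Grid = list[list[float]]


def neighbors(r: int, c: int, rows: int, cols: int):
    """Yield the 4-connected neighbours of (r, c) that lie inside the grid."""
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nr, nc = r + dr, c + dc
        if 0 <= nr < rows and 0 <= nc < cols:
            yield nr, nc


def path_exists(h: Grid, start, goal, max_slope_deg=35.0, cell_size=1.0) -> bool:
    """Breadth-first search that refuses steps steeper than max_slope_deg."""
    max_rise = math.tan(math.radians(max_slope_deg)) * cell_size
    rows, cols = len(h), len(h[0])
    seen = {start}
    queue = deque([start])
    while queue:
        r, c = queue.popleft()
        if (r, c) == goal:
            return True
        for nr, nc in neighbors(r, c, rows, cols):
            if (nr, nc) not in seen and abs(h[nr][nc] - h[r][c]) <= max_rise:
                seen.add((nr, nc))
                queue.append((nr, nc))
    return False


def trace_river(h: Grid, source):
    """Follow steepest descent so every step is strictly downhill; stop in a pit."""
    rows, cols = len(h), len(h[0])
    path = [source]
    r, c = source
    while True:
        nr, nc = min(neighbors(r, c, rows, cols), key=lambda p: h[p[0]][p[1]])
        if h[nr][nc] >= h[r][c]:
            return path  # local minimum: a fuller system might fill a lake here
        r, c = nr, nc
        path.append((r, c))
```

If `path_exists` fails between two objectives, a generator might lower the blocking cells or reseed the region rather than ship an unreachable map.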

Performance is another beast entirely. Generating detailed terrain is computationally expensive, so games typically use level-of-detail (LOD) systems, generating high-resolution terrain near the player while using simpler versions for distant areas. Minecraft’s chunk-based generation loads terrain as you explore and unloads distant areas to keep memory in check, but it can cause those annoying loading pauses when you move quickly.
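The bookkeeping behind chunk streaming and LOD can be sketched as a set difference recomputed whenever the player moves. This assumes Minecraft-style 16×16 chunks and a roughly circular view distance; the `plan_chunks` name and the LOD thresholds are arbitrary illustrations, not any engine’s real values.

```python
import math

CHUNK_SIZE = 16  # Minecraft's chunks are 16x16 blocks; other engines pick their own size


def chunk_of(x: float, z: float) -> tuple[int, int]:
    """Map a world position to integer chunk coordinates (floor handles negatives)."""
    return math.floor(x / CHUNK_SIZE), math.floor(z / CHUNK_SIZE)


def lod_for(dist_chunks: float) -> int:
    """0 = full detail; higher numbers = coarser meshes. Thresholds are arbitrary."""
    if dist_chunks <= 2:
        return 0
    if dist_chunks <= 5:
        return 1
    return 2


def plan_chunks(player_x: float, player_z: float, loaded: set, radius: int = 6):
    """Return (new chunks to load with their LOD, loaded chunks to unload)."""
    cx, cz = chunk_of(player_x, player_z)
    wanted = {}
    for dx in range(-radius, radius + 1):
        for dz in range(-radius, radius + 1):
            dist = math.hypot(dx, dz)
            if dist <= radius:  # roughly circular view distance
                wanted[(cx + dx, cz + dz)] = lod_for(dist)
    to_load = {chunk: lod for chunk, lod in wanted.items() if chunk not in loaded}
    to_unload = loaded - set(wanted)
    return to_load, to_unload
```

Moving fast means many chunks enter `to_load` at once, which is exactly where the loading pauses come from. A real engine would also re-mesh already-loaded chunks whose LOD changed, which this sketch leaves out.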

The Machine Learning Revolution (And Its Limitations)

Recent developments in neural networks have opened fascinating possibilities. Researchers have trained models that can transform simple sketches into detailed terrain or convert satellite imagery into game-ready landscapes. NVIDIA’s GauGAN, now available as NVIDIA Canvas, demonstrates the idea: you paint rough regions labeled “mountain,” “water,” or “forest,” and the AI generates a photorealistic landscape image matching your layout.

For game development, this could mean artists working at a much higher level of abstraction, describing what they want rather than manually sculpting every detail.

But machine learning brings complications. Training data requirements are substantial. The results can be unpredictable, sometimes brilliant, sometimes bizarre. And there’s the “black box” problem: when an algorithm does something wrong, figuring out why and fixing it is much harder than with traditional procedural methods, where you can trace exactly which rule produced which result.

Most studios I’m aware of use ML as a tool in the pipeline, not a replacement for it. An artist might generate several ML variations, pick the best parts from each, then refine manually.

Where This Technology Is Heading

The next frontier is context awareness. Current systems often treat terrain generation separately from narrative and gameplay needs. Future systems will likely integrate these elements, understanding that a bandit camp needs defendable high ground nearby, or that a quest location needs a distinctive landmark players can navigate toward.
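As a toy illustration of “context awareness,” a generator could score candidate sites against a gameplay need instead of placing them at random. This hypothetical `best_camp_site` treats “defensible high ground” as the cell rising most above the mean of its surrounding window (clipped at the map edge), optionally restricted to cells near a point such as a road. It is a crude heuristic of my own, not an established method.

```python
import math


def best_camp_site(h, radius=2, near=None, max_dist=math.inf):
    """Return ((row, col), score) for the cell that rises most above its
    surroundings, optionally only within max_dist of the point `near`."""
    rows, cols = len(h), len(h[0])
    best, best_score = None, -math.inf
    for r in range(rows):
        for c in range(cols):
            if near is not None and math.dist((r, c), near) > max_dist:
                continue  # too far from the road, quest giver, etc.
            window = [
                h[rr][cc]
                for rr in range(max(0, r - radius), min(rows, r + radius + 1))
                for cc in range(max(0, c - radius), min(cols, c + radius + 1))
                if (rr, cc) != (r, c)
            ]
            # Height above the local average is a rough proxy for a commanding view.
            score = h[r][c] - sum(window) / len(window)
            if score > best_score:
                best, best_score = (r, c), score
    return best, best_score
```

The same pattern (score, filter, pick) extends to other needs, such as a landmark that stands out from a quest location’s approach.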

We’re also seeing more real-time adaptation. Imagine terrain that subtly changes based on player actions: paths forming where players frequently walk, and vegetation growing back in abandoned areas. Some survival games already do primitive versions of this, but the computational cost limits how sophisticated it can be.
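A primitive version of player-worn paths fits in a small grid simulation. In this sketch (the `WearMap` class and all of its rates are hypothetical), every footstep adds wear to a cell, every tick lets all cells regrow a little, and a cell renders as a dirt path once its wear crosses a threshold.

```python
from dataclasses import dataclass, field


@dataclass
class WearMap:
    rows: int
    cols: int
    step_wear: float = 0.2       # wear added per footstep on a cell
    regrowth: float = 0.01       # wear removed from every cell per tick
    path_threshold: float = 0.6  # wear at which grass gives way to a path
    wear: list = field(default_factory=list)

    def __post_init__(self):
        if not self.wear:
            self.wear = [[0.0] * self.cols for _ in range(self.rows)]

    def tick(self, footsteps):
        """Regrow vegetation everywhere, then apply this tick's footsteps."""
        for row in self.wear:
            for c in range(len(row)):
                row[c] = max(0.0, row[c] - self.regrowth)
        for r, c in footsteps:
            self.wear[r][c] = min(1.0, self.wear[r][c] + self.step_wear)

    def is_path(self, r: int, c: int) -> bool:
        return self.wear[r][c] >= self.path_threshold
```

Updating every cell each tick is what makes this expensive at world scale; a real game would likely only simulate cells near players or update distant regions lazily.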

Cloud computing might change the equation. If generation happens on remote servers rather than local hardware, far more complex algorithms become viable. Microsoft Flight Simulator already does this, streaming terrain generated from satellite data as you fly.

The Creative Paradox

Here’s something I’ve thought about a lot: does infinite procedural content actually make games better?

Sometimes, yes. The exploration in games like The Elder Scrolls II: Daggerfall or Dwarf Fortress benefits from vast, unpredictable worlds. But many of my most memorable gaming moments happened in carefully handcrafted spaces where every element served a purpose. The vista approaching Anor Londo in Dark Souls wouldn’t hit the same if it were randomly generated variant #47,832.

The sweet spot seems to be hybrid approaches that combine algorithmic efficiency with human intentionality. Let algorithms handle scale and variation, but keep human designers in control of the experiences that matter most.

AI terrain generation isn’t replacing environment artists; it’s changing what they spend time on, shifting them from manual labor to creative direction and refinement. That seems like a worthwhile trade.

FAQs

Q: Is the terrain in games like Minecraft truly infinite?

A: Technically, it’s limited by computational constraints, but it’s practically endless: you’d never explore it all in a lifetime.

Q: Can AI terrain generation work for realistic games?

A: Yes, though it requires more sophisticated rules and often hybrid approaches combining generation with manual refinement.

Q: Does procedural terrain generation reduce game file sizes?

A: Yes, significantly. You’re storing algorithms rather than asset data, though high-resolution textures still take considerable space.

Q: How long does it take to generate terrain using these methods?

A: It depends on complexity: simple terrain generates in seconds, while detailed, large-scale environments might take hours of processing.

Q: Will this technology eliminate environment artist jobs?

A: Unlikely. It shifts their role toward creative direction, quality control, and handling aspects requiring human aesthetic judgment.