I’ve been playing competitive multiplayer games for over fifteen years now, and I’ve watched the evolution of how gaming communities police themselves. The shift from simple report buttons to sophisticated AI-driven reputation systems has been nothing short of remarkable, though not without its share of headaches.

What Are We Really Talking About Here?

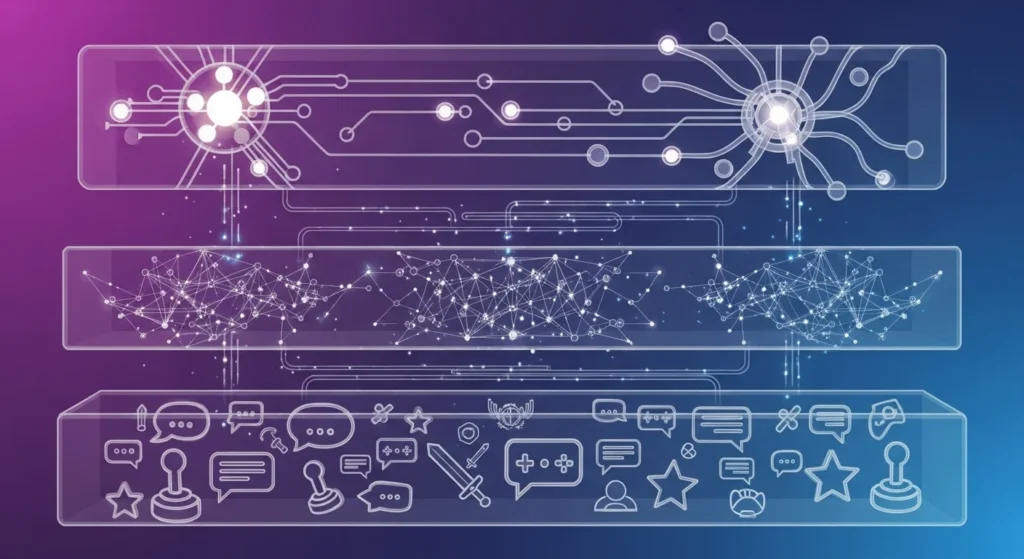

When we discuss AI reputation systems in multiplayer games, we’re talking about automated frameworks that track player behavior, assign scores or rankings based on conduct, and sometimes take action without human intervention. These aren’t just simple tallies of reports. Modern systems analyze communication patterns, gameplay behavior, team cooperation metrics, and even mouse movement patterns to build comprehensive profiles of how players interact with their communities.

Think of it like a credit score, but for gaming behavior. Just as your financial credit score follows you from bank to bank, your reputation in many modern games influences everything from who you’re matched with to whether you can access certain features.

How These Systems Actually Work

From my observations and conversations with developers at various gaming conferences, most AI reputation systems operate on multiple layers. The first layer is data collection: every chat message, every in-game action, and every disconnect is logged. Games like League of Legends and Dota 2 have been doing this for years.

The second layer involves pattern recognition. The AI doesn’t just look at isolated incidents. It examines context. Did you abandon a match once in 500 games, or do you regularly quit when your team falls behind? Did you type something inflammatory after being harassed for ten minutes, or did you start the conflict?
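To make the abandon example concrete, here is a minimal sketch of how a pattern check could separate a one-off disconnect from a habit of quitting when behind. It is not how any particular game does it; the `MatchRecord` fields, the `classify_abandons` function, and both thresholds are hypothetical names and values I made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class MatchRecord:
    abandoned: bool        # player left before the match ended
    team_was_behind: bool  # hypothetical flag: player's team was losing at that point


def classify_abandons(history, rate_threshold=0.05, behind_threshold=0.8):
    """Label a match history by abandon pattern (illustrative thresholds only)."""
    if not history:
        return "insufficient_data"
    abandons = [m for m in history if m.abandoned]
    if not abandons:
        return "clean"
    rate = len(abandons) / len(history)
    if rate < rate_threshold:
        # Rare abandons are treated as isolated incidents, whatever the score was.
        return "isolated"
    # Share of abandons that happened while the team was losing.
    behind_share = sum(m.team_was_behind for m in abandons) / len(abandons)
    if behind_share >= behind_threshold:
        return "quits_when_behind"
    return "frequent_abandons"


# One abandon in 500 games versus quitting 20 of 100 games while behind.
one_off = [MatchRecord(True, False)] + [MatchRecord(False, False)] * 499
habitual = [MatchRecord(True, True)] * 20 + [MatchRecord(False, False)] * 80
print(classify_abandons(one_off))
print(classify_abandons(habitual))
```

The point of the sketch is that the same raw event, an abandoned match, ends up with a different label depending on how often it happens and under what circumstances.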

I remember when Riot Games introduced their behavioral systems in League of Legends around 2015. Players could suddenly see reform cards explaining exactly what triggered penalties. The transparency was refreshing, though the system wasn’t perfect and still isn’t.

The Good, The Bad, and The Falsely Banned

The primary benefit is obvious: these systems scale in ways human moderation simply can’t. With games like Fortnite hosting millions of concurrent players, you’d need a small city’s worth of moderators to review every toxic incident manually. AI handles the volume.

I’ve also noticed that reputation systems create interesting behavioral incentives. In Overwatch, the endorsement system encouraged players to be more communicative and cooperative by rewarding positive behavior. Queue times shortened for players with good standing. The system essentially gamified being a decent human being.

But here’s where things get messy. False positives happen more often than most companies publicly admit. I personally know players who received temporary bans for unusual playstyles that the AI interpreted as griefing or throwing matches. One friend who exclusively played an off-meta strategy in Rainbow Six Siege got flagged because the system couldn’t differentiate between unconventional tactics and deliberate sabotage.

The context problem remains AI’s biggest weakness. These systems struggle with sarcasm, friendly banter between premade groups, and cultural differences in communication styles. A phrase that’s clearly joking among friends can look toxic to an algorithm scanning text without understanding relationships.

Real World Implementation Challenges

CS:GO’s Trust Factor system demonstrates both the potential and limitations of this technology. Valve remains deliberately vague about exactly what factors influence your Trust Factor, which makes the system harder to game but also frustrates players who don’t understand why they’re suddenly matched with toxic teammates.

I’ve tracked my own Trust Factor informally by noting match quality and teammate behavior patterns. After maintaining a clean record for months, I noticed significantly better teammates and fewer blatant cheaters. But when I introduced a friend who was new to the game, our combined Trust Factor apparently tanked, resulting in miserable matches filled with smurfs and rage quitters.

The opacity creates problems. Without understanding the metrics, players can’t improve their standing except through vague “be nice and play normally” advice. It’s like being told your credit score dropped without seeing which factors contributed.

The Privacy Question Nobody Wants to Address

Here’s something that keeps me up at night: these systems require massive data collection. Every word you type, every action you take, and potentially even voice communications get processed and stored. Most players click through the terms of service without considering what they’re consenting to.

Valorant’s Vanguard anti-cheat sparked controversy partly because of its kernel-level access, but reputation systems quietly collect arguably more personal information about behavior patterns and communication. We’re trading privacy for safer gaming environments, often without fully understanding the exchange.

Where This Technology Is Heading

Machine learning models are getting better at understanding context. I’ve seen demonstrations of systems that can identify coordinated harassment campaigns, distinguish between frustration and toxicity, and even detect early warning signs of radicalization in gaming communities.

Some developers are experimenting with rehabilitation-focused systems rather than purely punitive ones. Instead of just banning toxic players, these systems attempt to educate and reform behavior through mandatory tutorials, restricted communication until the player demonstrates improvement, and gradual privilege restoration.
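Here is a rough sketch of what gradual privilege restoration could look like for a player who is already under a chat penalty. I’m assuming a simple ladder of chat privileges; the `ChatLevel` tiers, the `next_level` function, and the five-clean-matches requirement are all hypothetical and not taken from any real game.

```python
from enum import IntEnum


class ChatLevel(IntEnum):
    # Hypothetical privilege tiers, from most to least restricted.
    MUTED = 0
    PINGS_ONLY = 1
    TEAM_CHAT = 2
    FULL = 3


def next_level(current, tutorial_done, clean_matches, required_clean=5):
    """Return the chat level a penalized player should hold after review."""
    if not tutorial_done:
        # The mandatory tutorial gates everything else.
        return ChatLevel.MUTED
    if clean_matches >= required_clean and current < ChatLevel.FULL:
        # Restore one tier at a time rather than lifting the penalty at once.
        return ChatLevel(current + 1)
    return current


print(next_level(ChatLevel.MUTED, tutorial_done=True, clean_matches=5).name)
```

Moving up one tier per review is the design choice that matters here: it gives the player a visible path back while limiting the damage if the behavior returns.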

The next frontier seems to be voice communication analysis. Text is relatively easy for AI to parse, but voice introduces accent, tone, emotion, and sarcasm. Early attempts have been clunky, but companies are investing heavily in this space.

What Players Should Know

If you’re serious about multiplayer gaming, understanding these systems matters. Your reputation follows you, sometimes across multiple games from the same publisher. A permanent ban in one game might affect your standing in others.

My advice? Assume everything is logged. Don’t say anything you wouldn’t want attached to your account permanently. Use mute functions liberally rather than engaging with trolls. And if you receive a penalty you believe is unjust, document everything and appeal through proper channels. Automated decisions do get overturned by human review.

The Bottom Line

AI reputation systems aren’t perfect, but they’re probably necessary at the current scale of online gaming. The alternative, unmoderated chaos or impossibly expensive human moderation, is worse. The technology will continue improving, becoming better at understanding context and nuance.

What concerns me is the lack of standardization and transparency. Every company implements these systems differently with varying levels of player visibility. We need industry-wide conversations about best practices, appeals processes, and data handling.

For now, these silent guardians work in the background, shaping our gaming experiences in ways most players never consciously notice. They’re imperfect gatekeepers, but they’re the gatekeepers we have.

FAQs

What exactly is a reputation system in gaming?

It’s an automated system that tracks player behavior and assigns scores or consequences based on actions like toxicity, teamwork, and fair play.

Can AI reputation systems make mistakes?

Yes. False positives happen, especially with unusual playstyles or context-dependent communication that algorithms misinterpret.

How can I improve my reputation score?

Play consistently, communicate respectfully, avoid abandoning matches, and report only genuine violations rather than filing reports out of frustration.

Do these systems track everything I say?

Most modern systems log chat and increasingly voice communication, though specifics vary by game and region.

Can I see my reputation score?

Some games show explicit scores or levels, such as Overwatch endorsements, while others keep them hidden, such as CS:GO’s Trust Factor. It varies considerably by title.

What happens if I’m falsely banned?

Contact player support with documentation. While slow, human review can overturn automated decisions when evidence supports your case.