I’ve spent years watching online communities struggle with the same persistent problem: players who deliberately ruin other people’s experiences. We call them griefers, and if you’ve ever played an online game, you’ve probably encountered them. The teammate who intentionally feeds kills to the enemy. The builder who destroys your hours of work. The player who blocks doorways just to watch you squirm.

For the longest time, dealing with griefers meant relying on player reports and human moderators trying to review mountains of footage and chat logs. It was exhausting, slow, and frankly, we couldn’t keep up. That’s where artificial intelligence entered the picture, and honestly, it’s changed the game, though not in the magical, solve-everything way you might think.

Understanding the Griefing Problem

Before diving into detection methods, let’s be clear about what we’re dealing with. Griefing isn’t just being bad at a game. It’s the intentional disruption of gameplay for others, often for the griefer’s own amusement. I’ve seen it take countless forms: team killing in tactical shooters, stream sniping popular content creators, blocking progress in MMORPGs, or spamming voice chat with noise.

The tricky part? Sometimes the line between legitimate strategy and griefing gets blurry. Is spawn camping griefing or just aggressive tactics? That’s where things get complicated, and why automated systems can’t work alone.

How AI Detection Actually Works

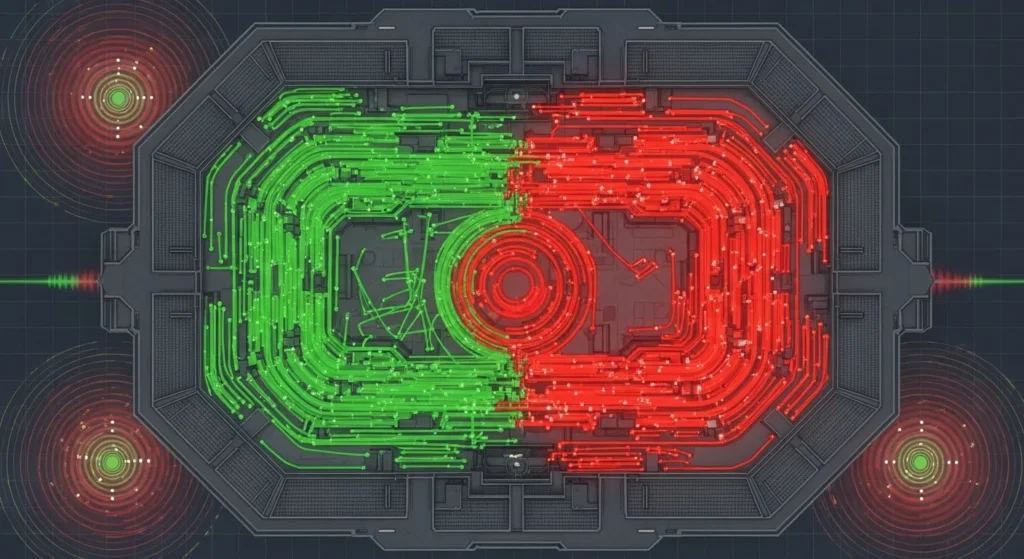

Modern griefing detection systems don’t rely on a single magical algorithm. Instead, they combine multiple approaches that look for patterns humans might miss or couldn’t possibly monitor at scale.

Behavioral pattern recognition is the backbone. These systems establish baselines for normal player behavior, then flag deviations. In a competitive shooter, for instance, the system tracks damage dealt to teammates versus enemies. A player who consistently shoots teammates in the back isn’t just having bad aim; they’re exhibiting a pattern.
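To make the baseline idea concrete, here’s a minimal sketch. Everything in it is an assumption for illustration: the function names, the z-score threshold, and the minimum match count are hypothetical, and a production system would use far richer features than a single ratio.

```python
from statistics import mean, pstdev


def team_damage_share(team_damage: float, enemy_damage: float) -> float:
    """Fraction of a player's total damage that landed on teammates."""
    total = team_damage + enemy_damage
    return team_damage / total if total > 0 else 0.0


def build_baseline(population_shares: list[float]) -> tuple[float, float]:
    """Baseline = mean and standard deviation across the wider player base."""
    return mean(population_shares), pstdev(population_shares)


def is_deviant(
    player_shares: list[float],
    baseline: tuple[float, float],
    z_threshold: float = 3.0,  # hypothetical cutoff, would need tuning
    min_matches: int = 5,      # hypothetical: ignore one-off accidents
) -> bool:
    """Flag a player whose average share sits far above the baseline."""
    mu, sigma = baseline
    if len(player_shares) < min_matches or sigma == 0:
        return False
    z_score = (mean(player_shares) - mu) / sigma
    return z_score > z_threshold


baseline = build_baseline([0.01, 0.02, 0.0, 0.03, 0.02, 0.01, 0.04, 0.02])
print(is_deviant([0.40, 0.50, 0.35, 0.60, 0.45], baseline))  # True
print(is_deviant([0.90], baseline))  # False: a single match is not a pattern
```

The minimum match count is what separates one bad grenade throw from a habit, which is the distinction the paragraph above is drawing.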

I worked with one development team that implemented a system tracking player movement patterns. Griefers often exhibit distinctive behaviors: repeatedly running into the same area to die, blocking specific chokepoints for extended periods, or following other players around without participating in objectives. The AI learned to recognize these movement signatures.
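I can’t share that team’s code, but one of those signatures, camping a chokepoint, can be sketched in a few lines. This is a hand-written heuristic rather than a learned model, and the function name, sampling interval, and radius are all assumptions of mine.

```python
from math import hypot


def longest_dwell(
    positions: list[tuple[float, float]],
    chokepoint: tuple[float, float],
    radius: float,
    tick_seconds: float = 1.0,  # assumed interval between position samples
) -> float:
    """Longest continuous time (seconds) spent within `radius` of a chokepoint."""
    longest = current = 0
    for x, y in positions:
        if hypot(x - chokepoint[0], y - chokepoint[1]) <= radius:
            current += 1
            longest = max(longest, current)
        else:
            current = 0  # the player moved away, so the streak resets
    return longest * tick_seconds


# Three ticks at the door, one tick away, then five more ticks at the door.
track = [(0.0, 0.0)] * 3 + [(10.0, 10.0)] + [(0.5, 0.0)] * 5
print(longest_dwell(track, chokepoint=(0.0, 0.0), radius=1.0))  # 5.0
```

A dwell time on its own proves nothing (defenders hold chokepoints all the time), so a number like this would be one input among several, not a verdict.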

Natural language processing handles text and voice chat. This goes beyond simple keyword filtering, which griefers learned to game years ago. Modern systems understand context, detect hate speech variations, and even catch coded language that toxic communities develop to evade basic filters. Though I’ll be honest, this remains one of the hardest challenges because human language is endlessly creative, especially when people are trying to be terrible.
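To show why plain keyword filters are so easy to game, here’s a small sketch of the normalization step that typically sits in front of a text classifier. The substitution table and the `normalize` name are my own assumptions, and this only undoes simple obfuscation; it does nothing about context or intent, which is the genuinely hard part.

```python
import re
import unicodedata

# Hypothetical substitution table for common character swaps.
LEET = str.maketrans(
    {"0": "o", "1": "i", "3": "e", "4": "a", "5": "s", "7": "t", "@": "a", "$": "s"}
)


def normalize(message: str) -> str:
    """Undo simple obfuscation before a message reaches a classifier."""
    # Split accented characters into base letter + combining mark, drop the marks.
    text = unicodedata.normalize("NFKD", message)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = text.lower().translate(LEET)
    text = re.sub(r"[^a-z\s]", "", text)      # strip separators like t.r.a.s.h
    text = re.sub(r"(.)\1{2,}", r"\1", text)  # collapse runs of 3+ repeated chars
    return re.sub(r"\s+", " ", text).strip()


print("trash" in "TR@5H!!!".lower())  # False: the naive filter misses it
print(normalize("TR@5H!!!"))          # trash
print(normalize("t.r.a.s.h"))         # trash
```

Aggressive normalization has a cost: it can turn innocent strings into false matches, which is one more reason its output should feed a model or a reviewer rather than trigger penalties directly.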

Network analysis looks at social connections and reporting patterns. If a player receives reports from many different people across multiple matches, that’s significant. But the systems also discount reports when the same group always reports together, which might indicate coordinated false reporting rather than legitimate complaints.
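A rough sketch of that weighting logic follows. The scoring rule is an assumption I’ve made for illustration: reporters who appear together in exactly the same set of two or more matches are treated as a single voice. Real systems would presumably use something more graded.

```python
from collections import defaultdict


def weighted_report_score(reports: list[tuple[str, str]]) -> float:
    """Score reports against one player; `reports` holds (match_id, reporter_id)."""
    matches_by_reporter: dict[str, set[str]] = defaultdict(set)
    for match_id, reporter in reports:
        matches_by_reporter[reporter].add(match_id)

    # Reporters with an identical set of matches are grouped into one bloc.
    blocs: dict[frozenset[str], set[str]] = defaultdict(set)
    for reporter, matches in matches_by_reporter.items():
        blocs[frozenset(matches)].add(reporter)

    score = 0.0
    for matches, members in blocs.items():
        if len(matches) >= 2 and len(members) >= 2:
            score += 1.0           # a bloc that always reports together counts once
        else:
            score += len(members)  # independent reporters each count in full
    return score


independent = [("m1", "a"), ("m2", "b"), ("m3", "c"), ("m4", "d")]
coordinated = [(m, r) for m in ("m1", "m2", "m3") for r in ("a", "b", "c")]
print(weighted_report_score(independent))  # 4.0
print(weighted_report_score(coordinated))  # 1.0
```

Note that three teammates reporting the same player after a single match still count as three: one shared match is an ordinary reaction, while a group that reports in lockstep across several matches is the pattern worth discounting.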

Real World Applications and Results

Riot Games’ approach to League of Legends provides a solid case study. Their systems analyze millions of matches, looking for intentional feeding (deliberately dying to help opponents), AFKing (going idle), and toxic chat behavior. What impressed me was their transparency; they published data showing the system reduced toxic behavior by significant margins when combined with player reform systems.

Counter-Strike has tackled a different challenge: catching griefers in community servers where traditional competitive rules don’t apply. The system had to learn the difference between casual gameplay and actual griefing in environments where “normal” behavior varies wildly. This required training on server-specific data and allowing community administrators to tune sensitivity.

Minecraft servers, especially larger networks, face unique griefing challenges because the destructive potential is so high. One AI-assisted system I encountered tracked block placement and destruction patterns. Building a house? Normal. Destroying 500 blocks of someone else’s build in three minutes? Definitely not. The system automatically rolled back damage and flagged accounts.
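The rate check at the heart of a system like that can be sketched as a sliding window. The class and method names below are hypothetical, and the 500-block, three-minute defaults are simply the figures from the example above, not values taken from any real plugin.

```python
from collections import deque


class DestructionMonitor:
    """Tracks one player's destruction of blocks owned by other players."""

    def __init__(self, max_blocks: int = 500, window_seconds: float = 180.0):
        self.max_blocks = max_blocks
        self.window_seconds = window_seconds
        self._events: deque[float] = deque()  # timestamps of recent foreign breaks

    def record_break(self, timestamp: float, block_owner: str, player: str) -> bool:
        """Record a block break; return True once the rate limit is reached."""
        if block_owner == player:
            return False  # tearing down your own build is not griefing
        self._events.append(timestamp)
        # Forget breaks that have fallen out of the time window.
        while self._events and timestamp - self._events[0] > self.window_seconds:
            self._events.popleft()
        return len(self._events) >= self.max_blocks


monitor = DestructionMonitor(max_blocks=5, window_seconds=10.0)
flags = [monitor.record_break(float(t), "alice", "mallory") for t in range(5)]
print(flags)  # [False, False, False, False, True]
```

The rollback and account flagging would hang off that `True`, and those parts depend entirely on how the server logs block history.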

The Limitations Nobody Talks About

Here’s what the promotional materials won’t tell you: these systems make mistakes. I’ve seen false positives punish legitimate players, and false negatives let obvious griefers slip through.

A particularly frustrating case involved a skilled player who genuinely tried unorthodox strategies. The system flagged their unusual movement patterns and off-meta gameplay as potential griefing. It took human review to recognize this was innovation, not disruption. This happens more often than developers admit.

Context remains incredibly difficult for algorithms. A player’s trash talking might be bantering with friends or harassing strangers; the words look identical, but the intent differs completely. Voice chat adds another layer because sarcasm, jokes, and genuine toxicity can sound remarkably similar.

Then there’s the arms race. Griefers adapt. When systems catch obvious team killing, griefers find subtler methods like using game mechanics in technically legal but disruptive ways. They’ll block spawns without standing in specific forbidden zones, or grief in ways that look like incompetence rather than malice.

Privacy and Ethical Considerations

Monitoring player behavior this closely raises legitimate questions. These systems analyze everything: your chat, your voice, your gameplay patterns, and even your social networks within games. Where’s the line between safety and surveillance?

I’ve sat in uncomfortable meetings where we debated how much data to collect and retain. Yes, we need evidence for appeals and system improvement. But indefinitely storing voice recordings and chat logs? That feels invasive, and security breaches happen.

There’s also the question of transparency. Should players know exactly how detection works? Full disclosure helps trust, but also helps griefers game the system. Most companies settle for explaining general principles without revealing specific thresholds, a compromise that satisfies nobody completely.

Bias is another concern I don’t see discussed enough. If training data comes primarily from one game mode, region, or player demographic, the system might not recognize griefing in other contexts. Worse, it might falsely flag legitimate cultural differences in communication or play styles.

The Human Element Remains Essential

Despite all this technology, human moderators remain irreplaceable. AI detects patterns and flags possibilities, but humans make final judgment calls on edge cases, handle appeals, and catch novel griefing methods the system hasn’t learned yet.

The best implementations I’ve seen use AI as a force multiplier, handling obvious cases automatically while directing human attention to complex situations. This hybrid approach processes vastly more reports than humans alone while maintaining the judgment and context-sensitivity that algorithms lack.
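In code, that division of labor often comes down to a routing decision on the model’s confidence score. The sketch below is illustrative only: the `Route` names and both thresholds are assumptions, and where to set those thresholds is a policy question as much as a technical one.

```python
from enum import Enum


class Route(Enum):
    AUTO_ACTION = "auto_action"    # obvious case, handled automatically
    HUMAN_REVIEW = "human_review"  # ambiguous case, sent to a moderator
    DISMISS = "dismiss"            # too little evidence to act on


def triage(
    confidence: float,
    auto_threshold: float = 0.95,     # hypothetical value
    dismiss_threshold: float = 0.20,  # hypothetical value
) -> Route:
    """Route a flagged incident based on the detector's confidence (0.0 to 1.0)."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0.0 and 1.0")
    if confidence >= auto_threshold:
        return Route.AUTO_ACTION
    if confidence <= dismiss_threshold:
        return Route.DISMISS
    return Route.HUMAN_REVIEW


for score in (0.99, 0.60, 0.05):
    print(score, triage(score).value)
```

Widening the gap between the two thresholds sends more cases to humans: slower and more expensive, but safer when the model is new or the game has just changed.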

Looking Forward

Detection systems continue improving. Machine learning models trained on years of data can now spot subtle griefing patterns I wouldn’t have noticed after reviewing the same footage. Cross-game data sharing, anonymized and consensual, helps systems recognize griefers who migrate between games.

Voice analysis is getting sophisticated enough to detect not just words but tone and intent. Movement prediction can anticipate griefing before it happens, allowing preventive intervention rather than just punishment.

But technology alone won’t solve this. The most effective anti-griefing strategies combine detection with community building, clear behavioral standards, reform systems for first-time offenders, and yes, permanent bans for serial griefers who won’t change.
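One way to picture that mix of reform and bans is an escalation ladder, where penalties grow only as confirmed offenses accumulate. The rungs and their order here are hypothetical; every game draws them differently.

```python
# Hypothetical ladder: mildest consequence first, permanent ban last.
LADDER = ("warning", "chat_restriction", "temporary_suspension", "permanent_ban")


def next_penalty(confirmed_offenses: int) -> str:
    """Pick a penalty from the number of confirmed (not merely reported) offenses."""
    if confirmed_offenses < 1:
        raise ValueError("at least one confirmed offense is required")
    index = min(confirmed_offenses, len(LADDER)) - 1
    return LADDER[index]


print(next_penalty(1))  # warning
print(next_penalty(4))  # permanent_ban
```

Counting only confirmed offenses matters: a false positive caught on appeal should never move a player up the ladder.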

Final Thoughts

AI griefing detection represents significant progress in protecting online communities, but it’s a tool, not a silver bullet. The systems work best when developers stay transparent about limitations, players understand what’s being monitored and why, and human oversight prevents algorithmic overreach.

Having watched this technology evolve from crude keyword filters to sophisticated behavioral analysis, I’m optimistic about the future. Online games are becoming more welcoming places. But they’re also reminding us that human problems, such as toxicity, harassment, and deliberate cruelty, can’t be entirely solved with technical solutions. Sometimes you just need a good moderator who understands the difference between a bad play and a bad actor.

FAQs

What exactly counts as griefing in online games?

Griefing is intentionally disrupting other players’ experiences through actions like team killing, destroying their creations, blocking progress, or harassment. It’s distinguished from poor play by deliberate intent to annoy or harm others’ enjoyment.

Can AI completely replace human moderators?

No. AI excels at detecting patterns and handling obvious cases at scale, but humans remain essential for context, edge cases, appeals, and situations requiring nuanced judgment that algorithms can’t reliably provide.

How accurate are AI griefing detection systems?

Accuracy varies by implementation and context, but modern systems typically achieve 85-95% accuracy on clear-cut cases. Ambiguous situations have much higher error rates, which is why human review remains important.

Do these systems violate player privacy?

They monitor behavior within games, which most terms of service allow, but they raise legitimate privacy questions about data collection, storage, and use. Policies vary significantly between games and platforms.

Can griefers trick AI detection systems?

Yes, to some extent. Griefers adapt their methods to avoid obvious patterns, which is why detection systems require constant updates and why the “arms race” between griefers and moderators continues.

What happens when someone is falsely accused?

Most systems include appeals processes where humans review flagged behavior. False positives do occur, which is why responsible implementations use escalating consequences rather than immediate permanent bans for first offenses.