I still remember the first time I truly noticed spatial audio in a game. It was during a tense moment in a survival horror title, and I heard footsteps approaching from behind and slightly to my left. The sound wasn’t just “there”; it had weight, distance, and directionality that made me physically turn around. That moment made me realize how much we’d been missing in gaming audio. Now, with AI stepping into the spatial audio space, we’re entering a new era where sound doesn’t just react to our environment; it intelligently adapts to it.

Beyond Simple Positional Audio

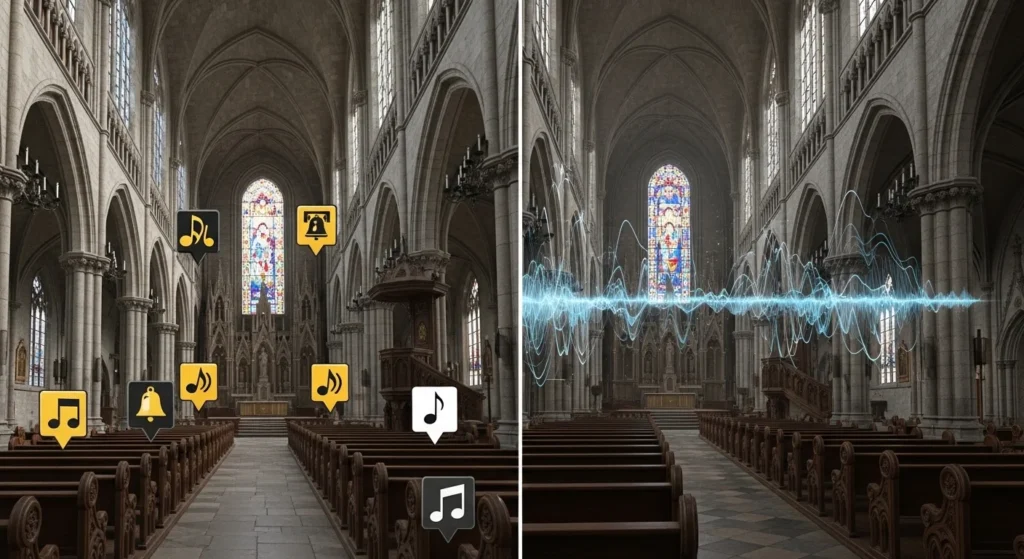

Traditional spatial audio in games has been around for years. Developers would manually place sound sources in a 3D space, and the audio engine would calculate how those sounds reached the player based on distance and direction. It worked, but it was fundamentally static. A gunshot in a cathedral sounded the same whether you were near stone walls or wooden pews.
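To make the traditional approach concrete, here is a minimal Python sketch of position-only audio: inverse-distance rolloff plus constant-power stereo panning. The function names, the 2D coordinate convention (+y is straight ahead), and the 1 m reference distance are my own assumptions for illustration, not any particular engine’s API.

```python
import math

def distance_gain(distance, ref_distance=1.0):
    """Inverse-distance rolloff, clamped so the gain never exceeds 1."""
    return ref_distance / max(distance, ref_distance)

def stereo_pan(azimuth_deg):
    """Constant-power pan: -90 = hard left, 0 = center, +90 = hard right."""
    # Sources behind the listener are clamped to the nearest side here.
    clamped = max(-90.0, min(90.0, azimuth_deg))
    angle = (clamped + 90.0) / 180.0 * (math.pi / 2.0)
    return math.cos(angle), math.sin(angle)

def positional_gains(listener_xy, source_xy):
    """Return (left, right) gains from the two positions alone."""
    dx = source_xy[0] - listener_xy[0]
    dy = source_xy[1] - listener_xy[1]
    distance = math.hypot(dx, dy)
    azimuth = math.degrees(math.atan2(dx, dy))  # 0 degrees = straight ahead (+y)
    left, right = stereo_pan(azimuth)
    gain = distance_gain(distance)
    return gain * left, gain * right
```

A source two meters straight ahead gets equal left and right gains of roughly 0.35. Notice that nothing in these functions knows about walls, materials, or room size, which is exactly the limitation described above.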

AI spatial audio intelligence changes this equation completely. Instead of relying solely on pre-programmed rules and manually placed audio cues, these systems analyze the game environment in real time, understanding geometry, materials, and even player behavior to create audio that feels genuinely alive and responsive.

The difference is comparable to the jump from pre-baked lighting to real-time ray tracing. Suddenly, we’re not just hearing sounds positioned in space; we’re hearing them interact with that space in ways that mirror reality.

How AI Makes Sound Smarter

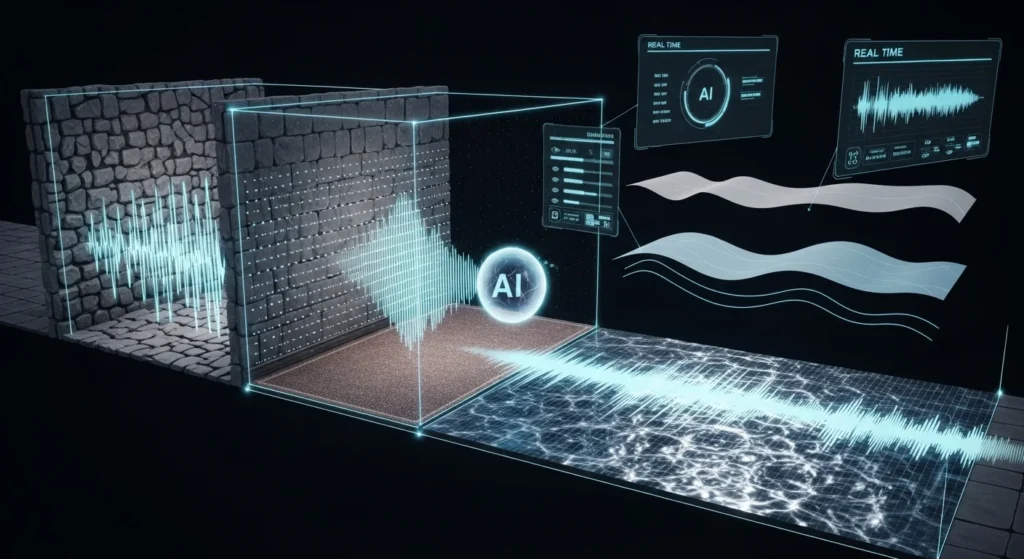

At its core, AI spatial audio intelligence uses machine learning models trained on real-world acoustic data. These systems have been fed thousands of hours of recordings showing how sound behaves in different environments: how it echoes in a parking garage, damps in a carpeted room, or reflects off water surfaces.

When you’re playing a game with this technology, the AI constantly analyzes what’s around you. It recognizes that you’ve walked from a stone corridor into a large open hall and adjusts the reverb and echo accordingly. If there’s a cloth banner nearby, the system understands that fabric absorbs high frequencies differently from how stone reflects them.
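One way to build intuition for how room size and materials change reverb is the classical Sabine equation, RT60 = 0.161 · V / A, where V is the room volume in cubic meters and A is the sum of each surface area multiplied by its absorption coefficient. The sketch below is not how any particular AI system works; it is a textbook approximation, and the absorption values and the `rt60` helper are illustrative assumptions rather than measured data.

```python
SABINE_CONSTANT = 0.161  # seconds per meter, valid for metric units

# Illustrative absorption coefficients (0 = fully reflective, 1 = fully
# absorptive). These are assumed round numbers, not measurements.
ABSORPTION = {"stone": 0.02, "wood": 0.10, "fabric": 0.50}

def rt60(volume_m3, surfaces):
    """Estimate reverb decay time in seconds.

    surfaces is a list of (area_m2, material_name) pairs.
    """
    total_absorption = sum(area * ABSORPTION[material] for area, material in surfaces)
    return SABINE_CONSTANT * volume_m3 / total_absorption

# A 2 m x 3 m x 10 m stone corridor: 60 m^3 of air, 112 m^2 of stone.
corridor = rt60(60.0, [(112.0, "stone")])

# A 20 m x 10 m x 30 m stone hall: 6000 m^3 of air, 2200 m^2 of stone.
hall_bare = rt60(6000.0, [(2200.0, "stone")])

# The same hall with 100 m^2 of its stone covered by cloth banners.
hall_with_banners = rt60(6000.0, [(2100.0, "stone"), (100.0, "fabric")])
```

With these assumed values the hall rings longer than the corridor, and hanging banners shortens its decay noticeably. A real system would typically do this per frequency band, since fabric absorbs highs far more than lows.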

Some implementations go even further. They track occlusion dynamically, meaning if an enemy is shouting at you but there’s a concrete wall between you, the AI doesn’t just lower the volume. It filters the sound so that the lower frequencies, which would realistically pass through concrete, still come through while the highs are muffled. Move around that wall, and the sound profile shifts in real time.
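The occlusion behavior described above can be approximated with a simple low-pass filter whose cutoff drops as the obstruction gets heavier. This is a minimal sketch under my own assumptions: the `occlusion` value from 0.0 to 1.0, the 20 kHz to 400 Hz cutoff range, and the gain curve are hypothetical tuning choices, and a production system would derive them from geometry and material data instead.

```python
import math

def one_pole_lowpass(samples, cutoff_hz, sample_rate=48000):
    """Apply a one-pole low-pass filter to a list of float samples."""
    alpha = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    out = []
    y = 0.0
    for x in samples:
        y += alpha * (x - y)  # move a fraction of the way toward the input
        out.append(y)
    return out

def occlude(samples, occlusion, sample_rate=48000):
    """Muffle a sound; occlusion runs from 0.0 (clear path) to 1.0 (blocked)."""
    # Hypothetical mapping: the cutoff slides geometrically from 20 kHz
    # down to 400 Hz, and overall gain falls from 1.0 to 0.3.
    open_cutoff, blocked_cutoff = 20000.0, 400.0
    cutoff = open_cutoff * (blocked_cutoff / open_cutoff) ** occlusion
    gain = 1.0 - 0.7 * occlusion
    filtered = one_pole_lowpass(samples, cutoff, sample_rate)
    return [gain * s for s in filtered]

def sine(freq_hz, n=4800, sample_rate=48000):
    """Generate n samples of a unit-amplitude sine wave."""
    return [math.sin(2.0 * math.pi * freq_hz * i / sample_rate) for i in range(n)]

def rms(samples):
    """Root-mean-square level of a list of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

# Compare how a low tone and a high tone survive a fully blocked path.
low_through_wall = rms(occlude(sine(100.0), 1.0))
high_through_wall = rms(occlude(sine(5000.0), 1.0))
```

With full occlusion, the 100 Hz tone keeps most of its level while the 5 kHz tone is cut far more heavily, which is the “muffled shout behind a wall” effect. Recomputing `occlusion` every frame as the player moves is what makes the sound profile shift in real time.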

I’ve tested builds where the AI even considers weather conditions and environmental factors. Rain doesn’t just add ambient noise; it also affects how distant sounds reach you, dampening them realistically based on the intensity of the downpour.

Real World Applications That Matter

The most immediate benefit shows up in competitive multiplayer games. In tactical shooters, audio cues can mean the difference between victory and elimination. When AI-driven spatial audio accurately represents footsteps on different surfaces, such as metal grates, wooden floors, and concrete, it adds a layer of tactical information that skilled players can leverage.

But it’s not just about competitive advantage. Horror games benefit enormously from intelligent audio. When the system can dynamically adjust creaking sounds, breathing, and environmental audio based on your exact position and the specific geometry around you, the immersion becomes visceral. The technology understands that terror works differently in a cramped air duct versus an expansive abandoned warehouse.

Open world games present another fascinating use case. Imagine riding through a city where the AI adjusts the entire soundscape based on building density, traffic patterns, and even time of day. The system recognizes that sound behaves differently in narrow alleys with tall buildings versus open plazas, and it adjusts everything from music to ambient noise to dialogue accordingly.

The Technical Reality and Challenges

Implementing AI spatial audio isn’t just a matter of flipping a switch. It’s computationally expensive, which is why we’re only now seeing it become feasible in consumer hardware. Modern gaming systems need to balance CPU and GPU loads, and adding sophisticated real-time audio processing to the mix requires careful optimization.

There’s also the question of authoring and control. Game audio designers have spent decades perfecting their craft with traditional tools. When you introduce AI that makes autonomous decisions about how audio should sound, you’re asking these professionals to partially surrender creative control. The best implementations I’ve seen strike a balance: the AI handles the technical heavy lifting of acoustic simulation, while designers maintain artistic control over the emotional and narrative aspects of sound.

Integration with existing game engines presents another hurdle. Audio middleware has traditionally been separate from visual rendering pipelines, but AI spatial audio works best when it has access to the same geometric and material data that graphics engines use. This requires deeper integration and, in some cases, substantial refactoring of how games are built.

What This Means for Players

From a player’s perspective, the benefits extend beyond just “better sound.” Well-implemented AI spatial audio can reduce cognitive load. Your brain doesn’t have to work as hard to parse audio information because it arrives in a more natural, expected way. This means you can stay immersed longer without fatigue.

Accessibility also improves significantly for players who rely heavily on audio cues, whether by preference or necessity. More accurate, informative spatial audio creates a more equitable playing field. When sound accurately conveys what’s happening around you, it becomes a powerful tool for everyone.

The technology also has potential for social gaming experiences. Voice chat that properly accounts for spatial positioning and environmental acoustics makes multiplayer interactions feel more natural and less like everyone’s shouting directly into your ear from an undefined void.

Looking Forward

We’re still in the early stages of AI spatial audio intelligence. Current implementations are impressive but represent just the beginning. As machine learning models become more sophisticated and hardware continues to advance, we’ll likely see audio that adapts not just to environments but also to player behavior and emotional states.

Imagine a game that notices you’re struggling with a section and subtly adjusts audio cues to be more helpful, or one that recognizes you’re deeply engaged in exploration and shifts the soundscape to support that contemplative mood. These aren’t far-fetched ideas; they’re logical next steps.

The convergence of AI audio, haptics, and adaptive music systems could create entertainment experiences in which sound isn’t just one element of immersion but the primary driver. We might see games designed audio-first, where visual elements support the sonic experience rather than the other way around.

What excites me most is the innovation potential we haven’t imagined yet. Every technological leap in gaming has enabled creative possibilities that weren’t obvious at first. AI spatial audio intelligence is providing tools that will let developers craft experiences we don’t yet have language to describe.

The sound of gaming is getting smarter, and honestly, that might be the most important sensory upgrade we’ve had in years.

FAQs

What is AI spatial audio in gaming?

AI spatial audio uses machine learning to create realistic, dynamic 3D sound that adapts to game environments, materials, and the player’s position in real time, rather than relying on static, preprogrammed audio positioning.

Does AI spatial audio require special hardware?

Most modern gaming systems can support AI spatial audio, though performance varies. High-end PCs, PlayStation 5, and Xbox Series X|S have dedicated audio processing that makes implementation more effective.

Can I experience AI spatial audio with regular headphones?

Yes. While specialized headphones enhance the experience, AI spatial audio works with standard stereo headphones by using binaural processing to create the illusion of 3D sound.

Which games currently use AI spatial audio?

Specific implementations vary, but several AAA titles and forward-thinking indie games are integrating these technologies. Adoption is growing rapidly as development tools become more accessible.

Does AI spatial audio impact game performance?

It can, but modern implementations are optimized to minimize performance impact. The processing often runs on dedicated audio hardware or efficiently shares resources with other game systems.