There’s something oddly satisfying about watching a character tumble down a flight of stairs in a video game. Maybe that sounds morbid, but anyone who’s spent hours in games like Grand Theft Auto or Hitman knows exactly what I’m talking about. Those ragdoll physics moments, where characters flop, roll, and collapse in unpredictable ways, have become a beloved, if sometimes hilarious, part of modern gaming.

But here’s the thing: traditional ragdoll physics, while entertaining, often looks awkward. Characters flail unnaturally, limbs bend in impossible directions, and bodies sometimes bounce around like they’re made of rubber. That’s where AI-based ragdoll physics enters the picture, and honestly, it’s changing how we think about character animation.

Understanding Traditional Ragdoll Physics

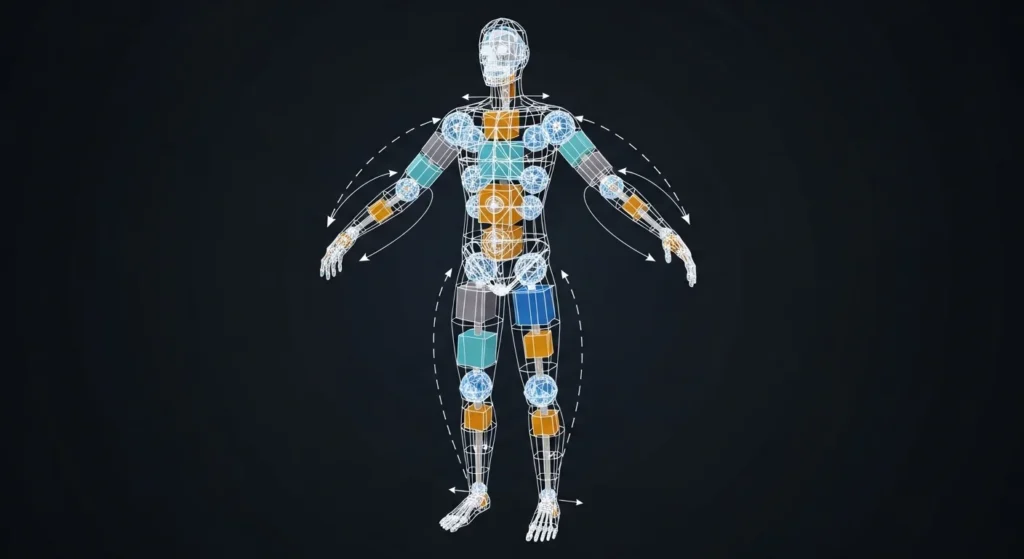

Before diving into the AI stuff, let’s quickly cover the basics. Traditional ragdoll physics treats a character’s body as a collection of rigid bodies connected by joints. When a character dies, gets knocked down, or loses control, the game engine hands control over to the physics simulation. The result? A limp body that reacts to gravity, collisions, and environmental forces.
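To make the "rigid bodies connected by joints" idea concrete, here is a minimal sketch of a passive ragdoll limb. It is not how any particular engine does it: it assumes a 2D world, point masses instead of full rigid bodies, fixed-length joints, and a flat floor, and it uses Verlet integration with constraint relaxation, one well-known way to build simple ragdolls. All names (`Particle`, `step`, `arm`) are made up for illustration.

```python
from dataclasses import dataclass

GRAVITY = -9.81   # m/s^2 along y (assumed units)
FLOOR_Y = 0.0     # height of an assumed flat floor


@dataclass
class Particle:
    x: float
    y: float
    prev_x: float
    prev_y: float


def make_particle(x, y):
    """Create a particle at rest (previous position equals current)."""
    return Particle(x, y, x, y)


def integrate(particles, dt):
    """Verlet step: velocity is implied by current minus previous position."""
    for p in particles:
        vx, vy = p.x - p.prev_x, p.y - p.prev_y
        p.prev_x, p.prev_y = p.x, p.y
        p.x += vx
        p.y += vy + GRAVITY * dt * dt


def satisfy_joints(particles, joints):
    """Each particle takes half the correction, restoring the rest length."""
    for a, b, rest in joints:
        pa, pb = particles[a], particles[b]
        dx, dy = pb.x - pa.x, pb.y - pa.y
        dist = (dx * dx + dy * dy) ** 0.5 or 1e-9  # avoid dividing by zero
        corr = 0.5 * (dist - rest) / dist
        pa.x += dx * corr
        pa.y += dy * corr
        pb.x -= dx * corr
        pb.y -= dy * corr


def step(particles, joints, dt, iterations=8):
    """One frame: apply gravity, then repeatedly fix joints and the floor."""
    integrate(particles, dt)
    for _ in range(iterations):
        satisfy_joints(particles, joints)
        for p in particles:
            p.y = max(p.y, FLOOR_Y)  # crude floor collision


# A three-point "arm" dropped from about two metres.
arm = [make_particle(0.0, 2.0), make_particle(0.3, 2.1), make_particle(0.6, 2.2)]
arm_joints = [(0, 1, 0.1 ** 0.5), (1, 2, 0.1 ** 0.5)]
for _ in range(200):
    step(arm, arm_joints, dt=1 / 60)
```

Notice what is missing: nothing in this loop knows it is simulating an arm. The limb only obeys gravity and joint lengths, which is exactly why a ragdoll built this way looks limp.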

The problem is that these systems don’t understand human biomechanics. A real person falling down stairs will instinctively brace themselves, extend their arms, and try to protect their head. Traditional ragdolls just fall. They’re passive objects with no sense of self-preservation or natural movement patterns.

I remember playing a game back in the early 2010s where enemies would collapse identically regardless of how they were hit. Shot in the chest? Same falling animation. Pushed off a ledge? Same limp tumble. It broke immersion constantly.

How AI Transforms Ragdoll Systems

AI-based ragdoll physics fundamentally changes this equation. Instead of treating bodies as simple physics objects, these systems use machine learning (specifically, deep reinforcement learning) to train virtual characters to move like actual humans or creatures.

The basic concept works like this: researchers train neural networks by having simulated characters learn to balance, walk, fall, and recover through millions of trial-and-error attempts. The AI essentially “learns” what realistic movement looks like by being rewarded for natural-looking motions and penalized for unnatural ones.
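What does "rewarded for natural-looking motions" look like in practice? One common shape, sketched here under my own assumptions, scores how closely the character's joint angles match a reference pose (for example, from motion capture) and subtracts a small penalty for wasted effort. The function name, parameters, and default weights below are illustrative guesses, not values from any specific paper or engine.

```python
import math


def naturalness_reward(joint_angles, reference_angles, joint_torques,
                       pose_scale=2.0, effort_weight=0.001):
    """Score one simulation step: higher means closer to the reference pose.

    All weights are hypothetical tuning values chosen for illustration.
    """
    # Sum of squared differences between current and reference joint angles.
    pose_error = sum((a - r) ** 2
                     for a, r in zip(joint_angles, reference_angles))
    # Maps error 0 to a score of 1.0 and large errors toward 0.0.
    pose_term = math.exp(-pose_scale * pose_error)
    # Discourage jerky, high-torque flailing.
    effort_penalty = effort_weight * sum(t * t for t in joint_torques)
    return pose_term - effort_penalty


matching = naturalness_reward([0.1, 0.2], [0.1, 0.2], [0.0, 0.0])
flailing = naturalness_reward([1.5, -1.0], [0.1, 0.2], [40.0, 35.0])
```

During training, the learning algorithm nudges the network toward actions that raise this number over millions of steps. A pose that matches the reference with no effort scores highest, and a flailing pose scores lower.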

What emerges is genuinely remarkable. Characters don’t just fall; they stumble. They reach out to grab ledges. They try to regain balance. They protect their heads during impacts. The movements feel alive because the AI has essentially learned the same lessons our own bodies learn through years of real-world experience.

Real-World Examples in Modern Games

Several studios have implemented varying degrees of AI-driven physics with impressive results.

Rockstar Games helped pioneer this territory by adopting NaturalMotion’s Euphoria engine, which first shipped in Grand Theft Auto IV. While not purely AI-based by today’s standards, Euphoria introduced behavioral responses to ragdoll states. Characters would try to break falls, push themselves up from the ground, and react contextually to being shot or hit.

More recently, games have pushed further. Titles like Control from Remedy Entertainment blend physics simulation with procedural animation in ways that feel eerily natural. Enemies react to being thrown, twisted, and slammed with physics that considers momentum, impact angles, and environmental interactions simultaneously.

The upcoming generation of games promises even more sophisticated implementations. Developers at various studios have been experimenting with deep learning models that can generate entirely new animations on the fly: movements the game designers never explicitly programmed.

Technical Challenges and Limitations

Let’s be honest: AI ragdoll physics isn’t a magic solution. There are significant hurdles developers face when implementing these systems.

Computational Cost:

Running neural network inference for physics calculations demands serious processing power. While a single character might be manageable, imagine dozens of NPCs all requiring simultaneous AI-driven physics. That’s why many games still use hybrid approaches, reserving AI physics for key moments or important characters.
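A hybrid approach can be as simple as a per-frame budget. The sketch below assumes each character carries a hypothetical `priority` score (say, based on distance to the camera or story importance) and gives the expensive AI-driven physics only to the top few. The field names and the `assign_physics_modes` helper are invented for illustration.

```python
def assign_physics_modes(characters, ai_budget):
    """Give AI-driven physics to the highest-priority characters only.

    `characters` is a list of dicts with hypothetical "name" and "priority"
    keys; `ai_budget` is how many AI-driven ragdolls the frame can afford.
    """
    ranked = sorted(characters, key=lambda c: c["priority"], reverse=True)
    return {
        c["name"]: ("ai" if rank < ai_budget else "passive")
        for rank, c in enumerate(ranked)
    }


crowd = [
    {"name": "boss", "priority": 10.0},
    {"name": "guard_near", "priority": 6.5},
    {"name": "guard_far", "priority": 1.2},
    {"name": "bystander", "priority": 0.4},
]
modes = assign_physics_modes(crowd, ai_budget=2)
```

Here the boss and the nearby guard get the learned controller, while the distant guard and the bystander fall back to a plain passive ragdoll.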

Training Complexity:

Creating these systems requires expertise in both game development and machine learning, two skill sets that don’t always overlap. Studios need specialized talent to build, train, and optimize these neural networks.

Consistency vs. Realism:

Sometimes, realistic physics creates gameplay problems. If every fall required a realistic recovery time, games would feel sluggish. Developers must balance physical accuracy against playability, which often means dialing back the realism.

Integration Difficulties:

Blending AI-driven ragdoll states with traditional keyframe animation isn’t trivial. The transitions between controlled movement and physics-based reactions need careful handling to avoid jarring switches.
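One way to soften that switch is to cross-fade between the two poses over a short window instead of cutting instantly. This is a simplified sketch: it assumes each pose is a flat list of joint angles and blends them linearly, whereas a real engine would typically interpolate 3D rotations (quaternions). The `blend_pose` and `smoothstep` helpers and the sample poses are illustrative only.

```python
def smoothstep(t):
    """Ease-in, ease-out curve on [0, 1]; input is clamped to that range."""
    t = max(0.0, min(1.0, t))
    return t * t * (3.0 - 2.0 * t)


def blend_pose(animated, simulated, elapsed, duration):
    """Cross-fade from the keyframed pose to the physics-driven pose.

    `animated` and `simulated` are equal-length lists of joint angles;
    `elapsed` and `duration` are in seconds.
    """
    weight = smoothstep(elapsed / duration)  # 0 = animation, 1 = physics
    return [(1.0 - weight) * a + weight * s
            for a, s in zip(animated, simulated)]


keyframe_pose = [0.0, 0.0, 0.0]
ragdoll_pose = [1.0, -0.5, 2.0]
start = blend_pose(keyframe_pose, ragdoll_pose, elapsed=0.0, duration=0.25)
halfway = blend_pose(keyframe_pose, ragdoll_pose, elapsed=0.125, duration=0.25)
end = blend_pose(keyframe_pose, ragdoll_pose, elapsed=0.25, duration=0.25)
```

The easing curve matters as much as the blend itself: starting and ending the transition gently hides the handoff better than a straight linear fade.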

The Future Looks Promising

Despite these challenges, I’m genuinely excited about where this technology is heading. Academic research in this field has accelerated dramatically over the past few years. Papers from institutions like DeepMind and various university labs have demonstrated virtual characters that can walk, run, jump, and recover from pushes with startling naturalism.

What gets me most excited is the potential for emergent behavior: situations where characters do things developers never explicitly designed. A character that instinctively crawls toward cover when injured. NPCs that help each other stand up after explosions. These possibilities open up entirely new dimensions of immersive storytelling and gameplay.

We’re also seeing improved tools that make these systems more accessible to smaller studios. Middleware solutions are emerging that package AI physics capabilities in ways that don’t require a dedicated machine learning team to implement.

Final Thoughts

AI-based ragdoll physics represents one of those technological shifts that might seem incremental from the outside but actually transforms player experience fundamentally. When characters move naturally, when they feel like physical beings with weight, momentum, and survival instincts, games become dramatically more immersive.

Sure, we’ll always have a soft spot for those glitchy ragdoll moments where characters launch into orbit after bumping into a barrel. But the future of game physics is intelligent, adaptive, and increasingly indistinguishable from reality. And that’s pretty incredible to witness.

Frequently Asked Questions

What exactly is ragdoll physics in gaming?

Ragdoll physics simulates limp body behavior by treating characters as connected rigid bodies that respond to gravity, collisions, and forces when they lose control or die.

How does AI improve ragdoll physics?

AI enables characters to react naturally to situations, attempting to balance, breaking falls, and protecting themselves rather than simply collapsing like passive objects.

Which games use AI-based ragdoll physics?

Notable examples include games using NaturalMotion’s Euphoria engine (GTA IV, Red Dead Redemption), Control, and various upcoming titles incorporating deep learning physics.

Is AI ragdoll physics computationally expensive?

Yes, running neural networks for physics calculations requires significant processing power, which is why many games use hybrid approaches or limit AI physics to specific scenarios.

Will AI ragdoll physics replace traditional animation?

Not entirely. Most games will blend hand-crafted animations with AI-driven physics, using each approach where it works best for gameplay and performance.

Can indie developers use AI ragdoll physics?

Middleware solutions are emerging that make these systems more accessible, though implementation still requires more technical expertise than traditional ragdoll systems.